Most people who create a survey for the first time assume the hard part is distributing it. It isn’t. The hard part is designing it so respondents complete it honestly and give you data you can actually use.

A poorly structured survey doesn’t just hurt your response rate; it also undermines your data quality. It produces noise that appears to be a signal, leading to decisions built on shaky ground.

To create an online survey, you need to define your objective, choose the right question types for your goal, sequence questions from broad to specific, add skip logic for relevance, and distribute it via email or in-app.

The 7 steps below cover each of these in order, with a first draft achievable in under 5 minutes using ProProfs Survey Maker’s AI.

Whether you’re building a customer satisfaction survey, an employee pulse check, or a market research survey, the same seven-step framework applies.

Let’s begin!

What Is an Online Survey and Why Does It Matter?

An online survey is a structured set of questions distributed digitally via email, website links, social media, or in-app prompts to collect targeted feedback or data from a specific audience. Respondents complete it at their convenience, and results are automatically aggregated for analysis.

Unlike face-to-face interviews or phone calls, online surveys scale without scaling cost. You can reach 50 respondents or 50,000 with the same design, and your data is captured, organized, and ready to analyze in real time.

Surveys matter because they give you something conversations can’t: comparable, structured data at scale. A one-on-one customer conversation tells you one story. A well-designed survey tells you how common that story is.

The business case is direct. A Gartner study from 2023 shows that companies that analyze customers’ preferences, past purchases, and feedback see a substantial increase in average order value and a boost in loyalty.

Moreover, according to Forrester’s 2024 State of Customer Obsession Survey, customer-obsessed organizations (which typically act on customer insights) achieve 51% higher customer retention than non-customer-obsessed organizations. You learn what’s broken before customers leave to find something better.

How Do You Create an Online Survey? The 7-Step Framework

Here’s the survey-creation framework that works whether you’re running a 2-question NPS survey or a 15-question market research study. Step 1 gets you to a complete first draft in under 5 minutes. Steps 2 through 7 sharpen it into something that performs.

Step 1: How Do You Build a Survey With AI in Under 5 Minutes?

This is where most of the manual work disappears. Here’s what the actual build process looks like when you use ProProfs Survey Maker’s AI.

1. Open the AI Survey Maker and type something as simple as: “Customer onboarding feedback for SaaS. Focus on friction, value perception, and objections. Include NPS and open comments.”

If you already have a document that describes your goals, such as a previous survey, a training outline, a policy brief, or a product spec, you don’t even need to type a prompt.

ProProfs Survey Maker’s AI can read a PDF, DOCX, or TXT file you upload and generate the full survey from it directly. For HR teams with existing onboarding checklists, educators with course syllabi, or consultants with assessment frameworks, this eliminates the blank-page problem before it starts.

Within seconds, it generates a full survey. You get rating scales where they actually make sense, open-text questions where real detail is needed, and other question types placed where they fit the flow. The questions are neutral.

There’s no leading language or built-in bias. The structure feels logical because it’s drawn from a library of proven survey patterns, so it’s consistent and battle-tested across thousands of similar programs.

It also handles global teams automatically. The AI works in over 70 languages, so if your respondents are spread across markets, you’re not rebuilding the survey for each region.

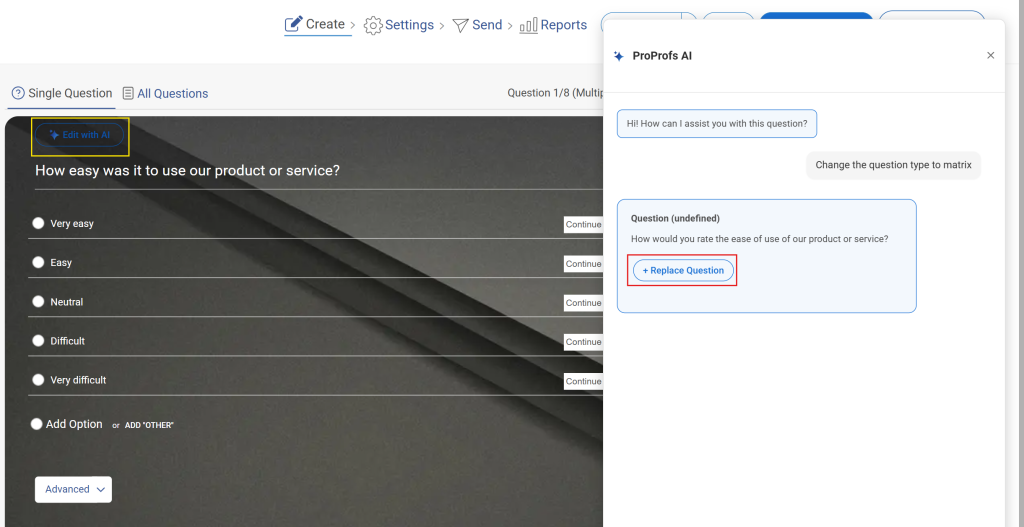

2. Then do a quick scan. Tweak your questions using AI to match your voice and reorder a question if needed.

3. Add branching where it makes sense. If someone gives a low NPS score, set a follow-up that asks why. The whole thing takes under 5 minutes from prompt to a survey that’s ready to send.

Once the questions are right, polishing and launching are just as quick.

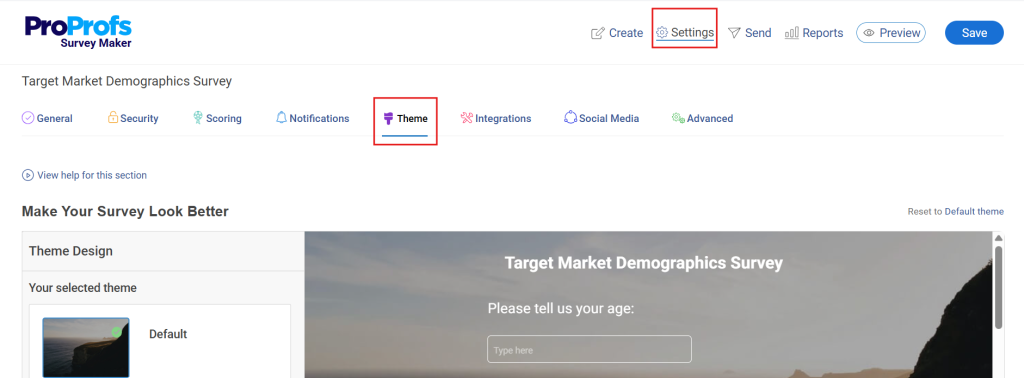

4. Add your logo, match the brand colors, and the survey looks professional without touching a design tool. Preview it on mobile, because that’s where most responses come from anyway.

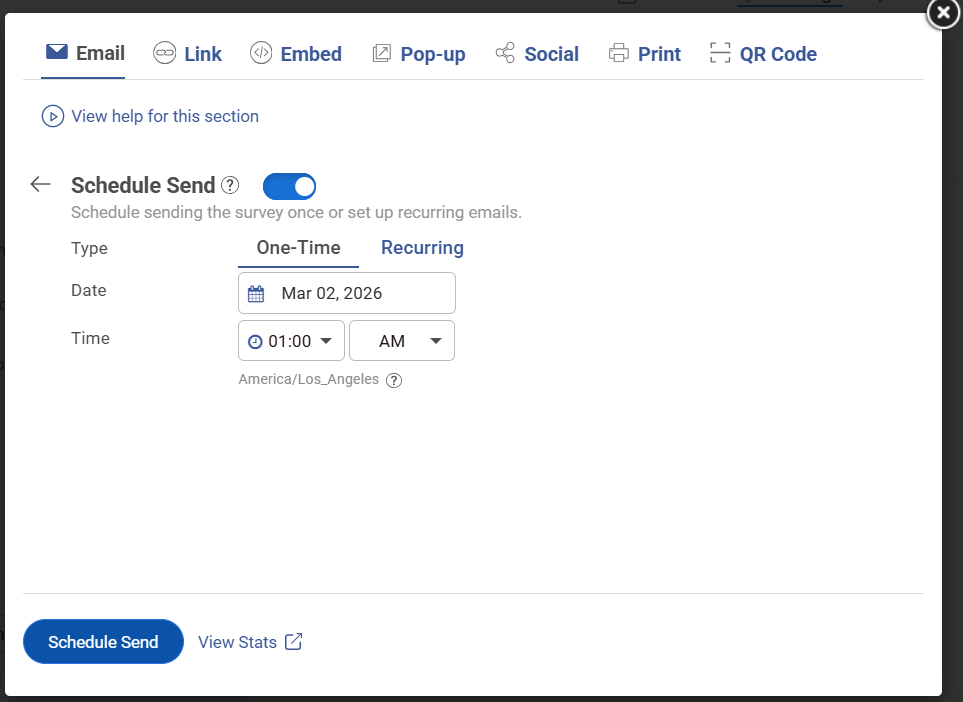

5. When it’s ready, send it however it fits. Embed it on the site, trigger a popup after a key action, email the link, or drop a QR code for an in-person or event context. Within minutes, you have a complete survey that’s clean, on-brand, and ready to collect.

If you need real-time signals between scheduled survey cycles, in-app micro-surveys complement your email program without replacing it.

Tools like Qualaroo capture feedback in-line during the product experience, without interrupting the user flow, so you’re not waiting 90 days for your next scheduled pulse to surface an issue.

Step 2: What Is the One Goal Your Survey Needs to Answer?

Before you finalize anything the AI generated, confirm you have a single clear objective: “By the end of this survey, I will know ___.”

If you can’t complete that sentence, you’re not ready to publish. Surveys without a defined objective collect data nobody uses. They also tend to run long, because every team member adds their own “nice to know” questions, and nobody has a principled reason to cut them.

One survey. One objective. Every question that doesn’t directly serve that objective gets cut before you launch.

Common survey objectives, mapped to survey type and question count:

| Objective | Ideal Question Count | Survey Type |
|---|---|---|
| Measure Post-Purchase Satisfaction | 3 to 5 | CSAT |
| Track Customer Loyalty Over Time | 1 to 3 | NPS |
| Understand Why Employees Are Disengaged | 10 to 15 | Employee Engagement |
| Qualify a New Market Segment | 5 to 8 | Market Research |
| Assess Training Effectiveness | 5 to 10 | Educational / Scored |
| Collect Event Feedback | 6 or fewer | Event Survey |

Ranges based on industry benchmarks from sources like PubMed, AIHR, CustomerGauge, Pylon, Attest, Docebo, and Cvent.
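If you want a quick sanity check before launch, the ranges in the table can be turned into a small length check. This is only a rough sketch: the dictionary, the function name `check_length`, and the lower bound of 1 for event surveys are assumptions made for illustration, not part of any survey tool.

```python
# Question-count ranges copied from the table above.
# Assumption: "6 or fewer" for event surveys is treated as 1 to 6.
RECOMMENDED_RANGE = {
    "CSAT": (3, 5),
    "NPS": (1, 3),
    "Employee Engagement": (10, 15),
    "Market Research": (5, 8),
    "Educational / Scored": (5, 10),
    "Event Survey": (1, 6),
}


def check_length(survey_type: str, question_count: int) -> str:
    """Compare a draft's question count with the recommended range."""
    low, high = RECOMMENDED_RANGE[survey_type]
    if question_count > high:
        return f"too long: cut {question_count - high} question(s)"
    if question_count < low:
        return f"short: room for {low - question_count} more question(s)"
    return "within range"


print(check_length("NPS", 5))   # too long: cut 2 question(s)
print(check_length("CSAT", 4))  # within range
```

A check like this will not tell you which questions to cut. That decision still comes back to the single objective you wrote down.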

Step 3: Who Is Your Target Audience?

An HR manager running a pulse check for a factory floor workforce needs a completely different survey design than one running it for a remote software team.

The first group needs short, plain-language questions, a mobile-friendly layout, and a fast completion window. The second can handle more nuance, longer Likert scales, and open-ended follow-ups.

Audience definition shapes your language, your question types, and the channels you use to distribute.

| Audience Type | Best Distribution Channel | Example Question Style |
|---|---|---|
| Customers (Post-Purchase) | Email or in-app popup | Short rating + one open-ended |
| Employees (Internal) | Company email or internal tool | Likert scale + anonymous option |
| Event Attendees | Post-event email or QR code | Rating + open-ended per session |
| Research Panel / Market Audience | Email or survey link | Multiple choice + open-ended |
| Lead Qualification / Prospect | Embedded on the landing page | Scored multiple-choice |

Data is drawn from sources such as the Yotpo Post-Purchase Survey Guide, Drive Research (anonymous employee surveys), Gallup Workplace Employee Surveys, Formbricks Post-Event Survey Guide, Nielsen Norman Group Survey Best Practices, and various CX, HR, event management, and market research guides (2024–2026).

Step 4: How Do You Choose the Right Survey Questions?

Question selection is where most surveys go wrong. The goal is to ask exactly what you need to know, in the format that makes it easiest for respondents to answer accurately.

The main question types and when to use each:

| Question Type | Best For | Avoid When |
|---|---|---|
| Multiple Choice (single) | Categorical data, easy decisions | You need to allow more than one answer |
| Multiple Choice (multi-select) | Preference lists, feature usage | You need clean comparative data |
| Rating Scale (1 to 5 or 1 to 10) | Satisfaction, importance ranking | You need open-ended, qualitative insight |
| NPS Scale (0 to 10) | Customer loyalty measurement | You only want satisfaction, not advocacy |
| Likert Scale | Attitude or agreement measurement | Audiences unfamiliar with the format |
| Open-Ended Text | "Why" behind a score, qualitative depth | Short surveys where analysis time is limited |
| Dichotomous (Yes/No) | Screener questions, eligibility | You need nuance or a sense of degree |
| Ranking | Priority ordering across options | More than 5 or 6 items to rank |

If you’re unsure which question type fits a particular point in your survey, ProProfs Survey Maker’s AI-powered question suggestions surface relevant options based on your stated survey goal as you build. You’re not choosing blindly from a list of 20 types.

One practical rule that applies regardless of type: keep each question focused on a single idea. “How satisfied are you with our product quality and pricing?” is two questions forced into one.

If the pricing is poor but the quality is excellent, the respondent is stuck. Their answer becomes noise.

Ready-to-use question examples by survey type:

Customer Satisfaction Survey

- How satisfied are you with your recent experience? (1 to 5 scale)

- What was the most valuable part of your experience?

- How likely are you to return? (Very Likely / Likely / Unlikely / Very Unlikely)

- What could we improve?

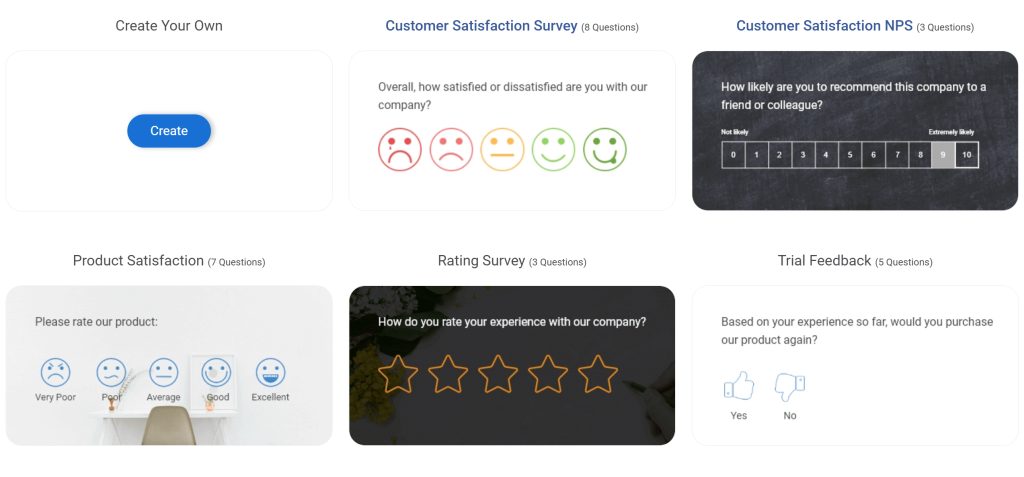

Employee Engagement Survey

- How clearly do you understand your role and responsibilities? (1 to 5)

- Do you feel your work is recognized and appreciated? (Yes / No / Sometimes)

- What is one thing leadership could do to improve your day-to-day experience?

- How likely are you to recommend this company as a place to work? (NPS scale)

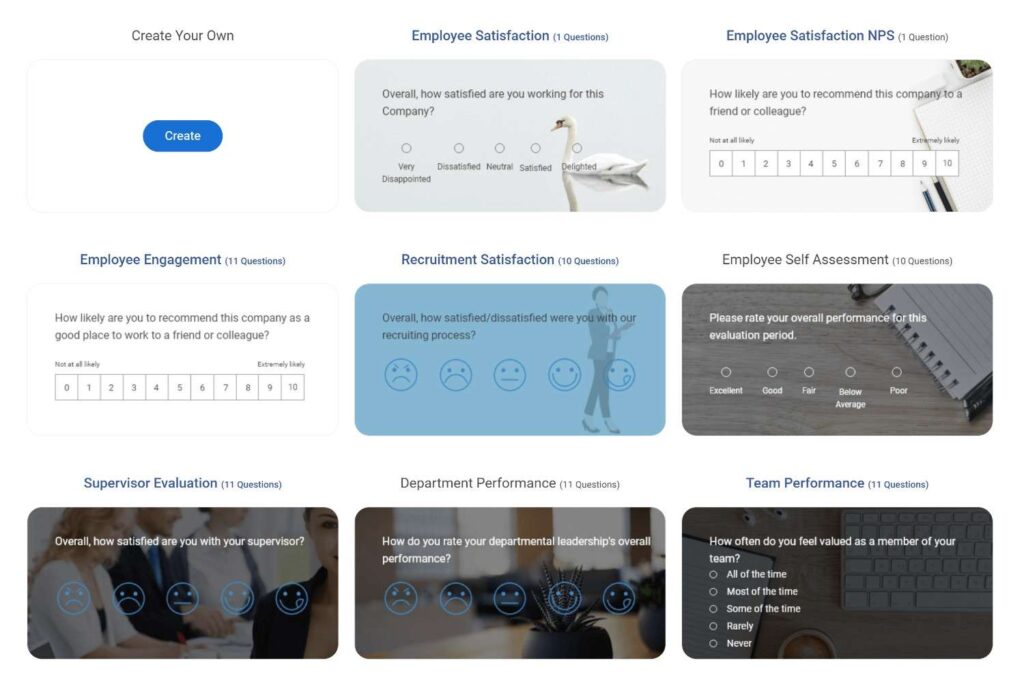

Here are a few Employee Engagement templates:

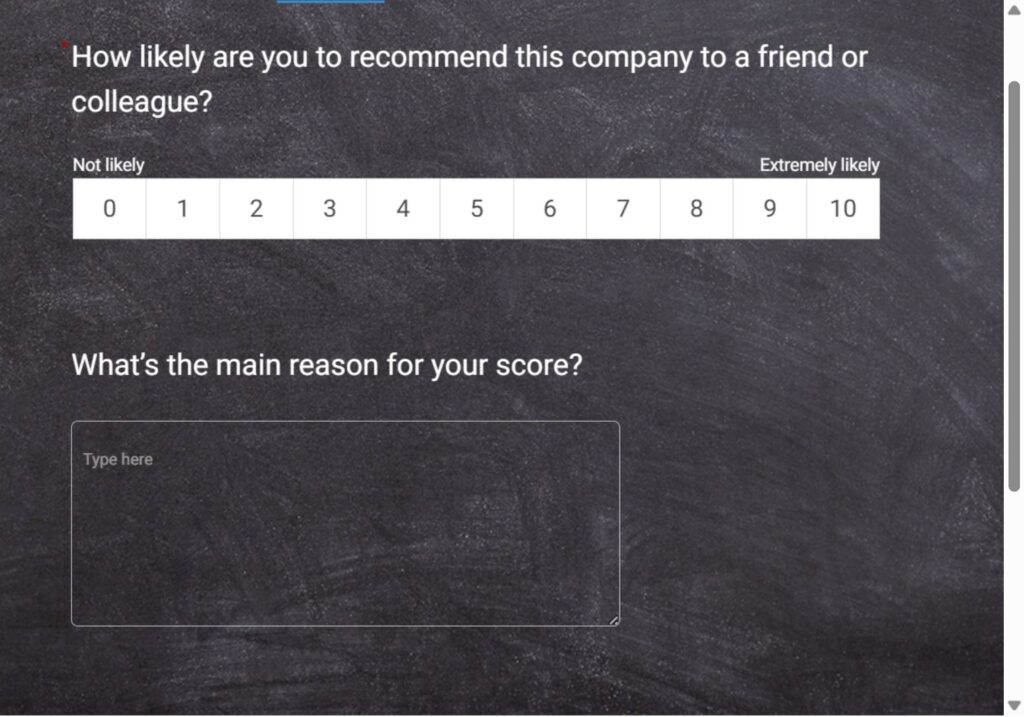

NPS Survey

- On a scale of 0 to 10, how likely are you to recommend [Company] to a friend or colleague?

- What is the main reason for your score?
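Once the 0-to-10 responses come in, the score itself is simple arithmetic. The sketch below uses the standard NPS convention (9 and 10 are promoters, 7 and 8 are passives, 0 to 6 are detractors), which this article does not spell out. The function name and the sample scores are made up for illustration.

```python
def net_promoter_score(scores: list[int]) -> float:
    """Return NPS: percent promoters (9-10) minus percent detractors (0-6)."""
    if not scores:
        raise ValueError("at least one response is required")
    if any(s < 0 or s > 10 for s in scores):
        raise ValueError("NPS answers must be between 0 and 10")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100 * (promoters - detractors) / len(scores)


# Hypothetical responses: 5 promoters, 2 passives, 3 detractors.
sample = [10, 9, 8, 7, 6, 3, 10, 9, 5, 10]
print(net_promoter_score(sample))  # 20.0
```

The result ranges from -100 (all detractors) to 100 (all promoters), which is why the open-ended follow-up matters: the number alone says nothing about why people landed where they did.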

Step 5: How Should You Structure and Sequence Your Questions?

Question order affects how people answer. A well-sequenced survey builds momentum from easier, broader questions toward more specific or sensitive ones.

The general rule: broad before narrow, impersonal before personal, behavioral before attitudinal.

- Start with one or two easy, engaging questions to reduce early drop-off

- Group related questions together so the respondent isn’t context-switching constantly

- Place open-ended questions after closed-ended ones where possible, not at the start

- Put demographic questions last. Opening with age, income, or job title raises friction before you’ve earned the respondent’s trust

Step 6: How Do You Add Depth With Open-Ended Comment Fields?

Ratings and scales tell you what. Open-ended fields tell you why.

A 3-out-of-5 satisfaction score is useful as a trend metric. But if you add “What’s the main reason for your score?” as a follow-up, you get the context that turns a number into an action.

Use one open-ended question per major topic. Beyond that, completion rates drop as respondents face a wall of text fields.

For NPS programs and scored surveys, attach a single open-ended follow-up to the score question. That pairing consistently produces the highest-value data.

Step 7: How Do You Distribute and Run Surveys on a Regular Cadence?

A single survey is a data point. A recurring survey program is a trend line.

The most useful customer and employee data comes from running the same core questions at consistent intervals.

An NPS survey sent quarterly gives you four comparable data points per year. An employee engagement pulse sent every six months tells you whether changes in management or policy actually moved the needle.

Send your survey on Tuesday between 6 AM and 9 AM if you’re targeting a US business audience. One well-timed follow-up reminder, sent 3 to 7 days after the initial invite, can increase response rate by up to 14%. You can check this guide for complete guidance.

How Do You Design a High-Response Survey? A Practical Framework

Before distributing your survey, score it against these five criteria.

What Are the Most Common Survey Design Mistakes to Avoid?

This is where most survey programs quietly fail. The data looks normal. The completion rate seems fine. But the insights are corrupted because the survey design introduced systematic errors.

Leading Questions: Are You Steering Respondents to the Answer You Want?

A leading question nudges the respondent toward a specific answer. “How much did you enjoy our onboarding experience?” assumes enjoyment. “How would you describe your onboarding experience?” does not.

Leading questions produce artificially positive data. You’ll see great satisfaction scores right up until customers stop renewing, and you won’t see it coming.

Double-Barreled Questions: Are You Asking Two Things at Once?

“How satisfied are you with our product quality and customer service?” is two questions.

If the respondent loves the product but had a poor service experience, there’s no accurate answer available. They pick the closest option, and your data is skewed.

Rule: one question, one idea. Always.
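If you are reviewing a long draft, a crude text check can surface candidates for a second look. The sketch below simply flags any question containing the word "and". It is a deliberately blunt heuristic with false positives (a phrase like "role and responsibilities" is one idea), so treat it as a prompt for human review, not a verdict. The function name is hypothetical.

```python
import re

# Matches the standalone word "and", ignoring case.
CONJUNCTION = re.compile(r"\band\b", re.IGNORECASE)


def flag_possible_double_barreled(questions: list[str]) -> list[str]:
    """Return the questions that contain 'and' and deserve a manual check."""
    return [q for q in questions if CONJUNCTION.search(q)]


draft = [
    "How satisfied are you with our product quality and customer service?",
    "How would you describe your onboarding experience?",
    "What could we improve?",
]
for question in flag_possible_double_barreled(draft):
    print("Review:", question)
```

For each flagged question, ask whether a respondent could feel differently about the two halves. If so, split it.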

Survey Fatigue: Are You Asking Too Much?

Survey fatigue is documented and measurable. Research from the Journal of Caring Sciences (2024) identifies it as a primary source of careless responses and survey attrition.

The symptoms: respondents speed through later questions, select neutral options in bulk, leave open-ended fields blank, or abandon the survey entirely.

The threshold is lower than most people assume. Research from Milieu Insight found that neutral “no opinion” responses increased sharply in surveys with more than 50 questions.

But quality starts degrading earlier, well before you hit that mark. Keep it short. Cut every “nice to know” question that isn’t essential to your objective.
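One of those symptoms, bulk neutral answers late in the survey, is easy to look for in exported data. The following is a minimal sketch, assuming each respondent's answers arrive as a list of 1-to-5 ratings in question order. The neutral value of 3 and the 80% threshold are illustrative assumptions, not research-backed cutoffs.

```python
def neutral_share(answers: list[int], neutral: int = 3) -> float:
    """Fraction of answers equal to the neutral midpoint."""
    if not answers:
        raise ValueError("answers must not be empty")
    return sum(1 for a in answers if a == neutral) / len(answers)


def looks_fatigued(answers: list[int], neutral: int = 3,
                   threshold: float = 0.8) -> bool:
    """Flag a response whose second half is mostly neutral answers."""
    if not answers:
        raise ValueError("answers must not be empty")
    second_half = answers[len(answers) // 2:]
    return neutral_share(second_half, neutral) >= threshold


print(looks_fatigued([4, 5, 2, 4, 3, 3, 3, 3]))  # True
print(looks_fatigued([4, 5, 2, 4, 5, 3, 4, 2]))  # False
```

If a large share of responses trip a check like this, the likelier fix is a shorter survey, not discarding the respondents.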

Poor Question Sequencing: Does Your Survey Flow Logically?

Respondents shouldn’t have to mentally switch contexts every few questions. Group related questions together. Don’t ask about product quality, then jump to demographic details, then return to product questions.

A common sequencing error is placing screener questions too late. If you’re surveying customers who made a purchase in the last 30 days, that screener belongs at the start, not at question 8.

Not Communicating Anonymity: Do Respondents Know Their Answers Are Safe?

This one is especially damaging in employee surveys, exit interviews, and other contexts where respondents might fear judgment or repercussions.

If people aren’t confident that their responses are anonymous, they self-censor. They pick the safest answer, not the honest one. Your satisfaction scores look fine. Your engagement data looks stable. And none of it reflects what people actually think.

The fix is simple but frequently skipped: state clearly at the start of the survey whether responses are anonymous, confidential, or attributed.

If they’re anonymous, say so explicitly and explain what that means in practice. “Your responses are anonymous and cannot be linked back to you” does more for data quality than any question design tweak.

Inadequate Answer Options: Are Your Answer Choices Complete?

Closed-ended questions with incomplete answer sets force respondents to pick the least-wrong option.

Always include an “Other (please specify)” option for multiple-choice questions where your list isn’t exhaustive.

For satisfaction or frequency scales, include a “Not applicable” option where relevant, so respondents aren’t forced to answer questions that genuinely don’t apply to them.

FREE. All Features. FOREVER!

Try our Forever FREE account with all premium features!

What Do Real Businesses Learn From Running Surveys?

Justin Fredericks at Harvard Innovation Lab reported that ProProfs Survey Maker’s instant analytics and reporting gave their team detailed insights into their target audience.

This enabled better-informed market research decisions at one of the world’s most competitive innovation environments.

Helena Romero, HR Specialist at College Hospital Cerritos, used ProProfs surveys to achieve 100% compliance in a shorter time period.

What previously required manual tracking, follow-up, and chasing individuals through email was replaced by an automated survey program that tracked completion in real time.

At the California Department of Public Health, the result was operational: ProProfs saves a lot of time in issuing certificates and tracking.

A high-volume certification workflow that depended on paper records and manual verification moved to a digital survey system that handled data collection, tracking, and reporting in one place.

The pattern is consistent across all three: surveys that are short, targeted, regularly run, and connected to a system that acts on the data produce results.

The tool matters, but the discipline of running a feedback program matters more.

How Can a Tool Make Creating Surveys Easier?

If your current process involves starting from a blank document, rebuilding question sets for every new program, or manually collating responses from a spreadsheet, you’re spending time on infrastructure that should be automated.

A tool like ProProfs Survey Maker handles the full workflow:

- AI-powered survey generation from a plain-language prompt or an uploaded document

- 100+ professionally designed templates

- 1,000,000+ ready-to-use questions across every industry and use case

- Skip logic and conditional branching

- White labeling

- Multilingual support across 70+ languages

- A real-time reporting dashboard

The Forever Free plan includes all premium features with no credit card required, up to 50 responses per survey, and 500 email sends.

For small teams, occasional programs, or anyone evaluating before committing, it’s a zero-risk starting point.

Create a Survey Today!

You now have the full framework: how to build a first draft in under 5 minutes with AI, how to pick the right question types for your goal, how to use skip logic so every respondent sees only what’s relevant, and how to spot the design mistakes that corrupt data before it reaches you.

The next step is to run the survey, review the data, and repeat it next quarter with the same core questions.

That’s when a feedback form becomes a feedback system, and a feedback system is what actually changes decisions.

Get started free with ProProfs Survey Maker. Create your first survey in under 5 minutes with AI.

Frequently Asked Questions

What is the best question type for measuring customer satisfaction?

A rating scale (1 to 5 stars or a satisfaction scale) is most effective for measuring satisfaction because it produces quantifiable, comparable data over time. Follow the rating with a single open-ended question asking for the reason behind the score. This pairing gives you both the metric and the context.

How do I improve my survey response rate?

The three highest-impact actions are: keep the survey under 5 minutes, personalize the invitation, and send a single follow-up reminder 3 to 7 days after the original. A 2021 PMC study shows that personalized invitations can increase response rates by 8.6 percentage points or more. A single reminder can add up to 14 percentage points to your response rate.

What is skip logic in a survey, and why does it matter?

Skip logic is a feature that automatically shows or hides questions based on a respondent's previous answers. It keeps the survey relevant to each individual respondent, which increases both completion rates and data quality. Surveys using skip logic typically see fewer drop-offs than linear surveys.
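To make the idea concrete, here is a minimal sketch of how skip logic can be modeled: each question carries an optional condition, and only questions whose condition passes are shown. This is an illustration of the concept, not how ProProfs Survey Maker or any other tool implements it. The `Question` class, the question keys, and the "6 or below" cutoff for a low NPS score are all assumptions.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Question:
    key: str
    text: str
    # Condition on earlier answers; None means the question is always shown.
    show_if: Optional[Callable[[dict], bool]] = None


QUESTIONS = [
    Question("nps", "On a scale of 0 to 10, how likely are you to recommend us?"),
    Question(
        "why_low",
        "What is the main reason for your score?",
        # Assumed rule: only ask for a reason when the score is 6 or below.
        show_if=lambda a: "nps" in a and a["nps"] <= 6,
    ),
    Question("improve", "What could we improve?"),
]


def visible_questions(answers: dict) -> list[str]:
    """Return the keys of the questions this respondent should see."""
    return [q.key for q in QUESTIONS if q.show_if is None or q.show_if(answers)]


print(visible_questions({"nps": 4}))  # ['nps', 'why_low', 'improve']
print(visible_questions({"nps": 9}))  # ['nps', 'improve']
```

The respondent who scored 9 never sees the follow-up, which is the whole point: fewer irrelevant questions, fewer reasons to quit.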

What is the difference between a survey response rate and a completion rate?

Response rate is the percentage of invited people who answered at least one question. Completion rate is the percentage of people who started the survey and finished it.
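The two definitions above differ only in their denominator, which a short worked example makes clear. The function names and the numbers are hypothetical.

```python
def response_rate(invited: int, started: int) -> float:
    """Percent of invited people who answered at least one question."""
    return 100 * started / invited


def completion_rate(started: int, completed: int) -> float:
    """Percent of people who started the survey and finished it."""
    return 100 * completed / started


# Hypothetical campaign: 1,000 invites, 250 people start, 200 finish.
print(response_rate(invited=1000, started=250))     # 25.0
print(completion_rate(started=250, completed=200))  # 80.0
```

A low response rate usually points to the invitation or the channel, while a low completion rate points to the survey itself.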

How do you avoid leading questions in a survey?

Write questions in neutral language that do not suggest the desired answer. Compare "How much did you enjoy working with our support team?" (leading) to "How would you describe your experience with our support team?" (neutral). Before publishing, have someone outside your team read each question and flag any that feel directional.

What is a good online survey for employee engagement?

An effective employee engagement survey includes Likert-scale questions covering manager effectiveness, role clarity, recognition, and intent to stay. The ideal length is 10 to 15 questions, distributed quarterly or biannually, with enough open-ended follow-up to capture the reasons behind low scores. Use the same core questions each cycle to track trend data over time.

Can I create an online survey for free?

Yes. ProProfs Survey Maker's Forever-Free plan includes all premium features, unlimited surveys, up to 50 responses per survey, and 500 email sends, with no credit card required. This is sufficient for most small-team programs, one-off studies, or anyone who wants to evaluate the platform before committing to a paid plan.

